How I Use Claude Code at Stellar

In January 2026, our company, Stellar Development Foundation, launched an AI Software Engineer Guide with six levels of AI usage maturity.

- Levels 1–2: treat AI primarily as a typist or search tool, such as IDE auto-completion or basic query answering.

- Level 3: uses AI as a co-author, providing clear instructions to complete small, well-scoped tasks.

- Level 4: represents basic agentic use, where AI can execute a small project under human guidance.

- Level 5: introduces intermediate agentic workflows with feedback loops - the AI proposes a plan, the human approves it, and the AI executes, tests, and summarizes the results.

- Level 6: reflects advanced agentic use, where humans define objectives, constraints, and success criteria, and AI operates independently for extended periods.

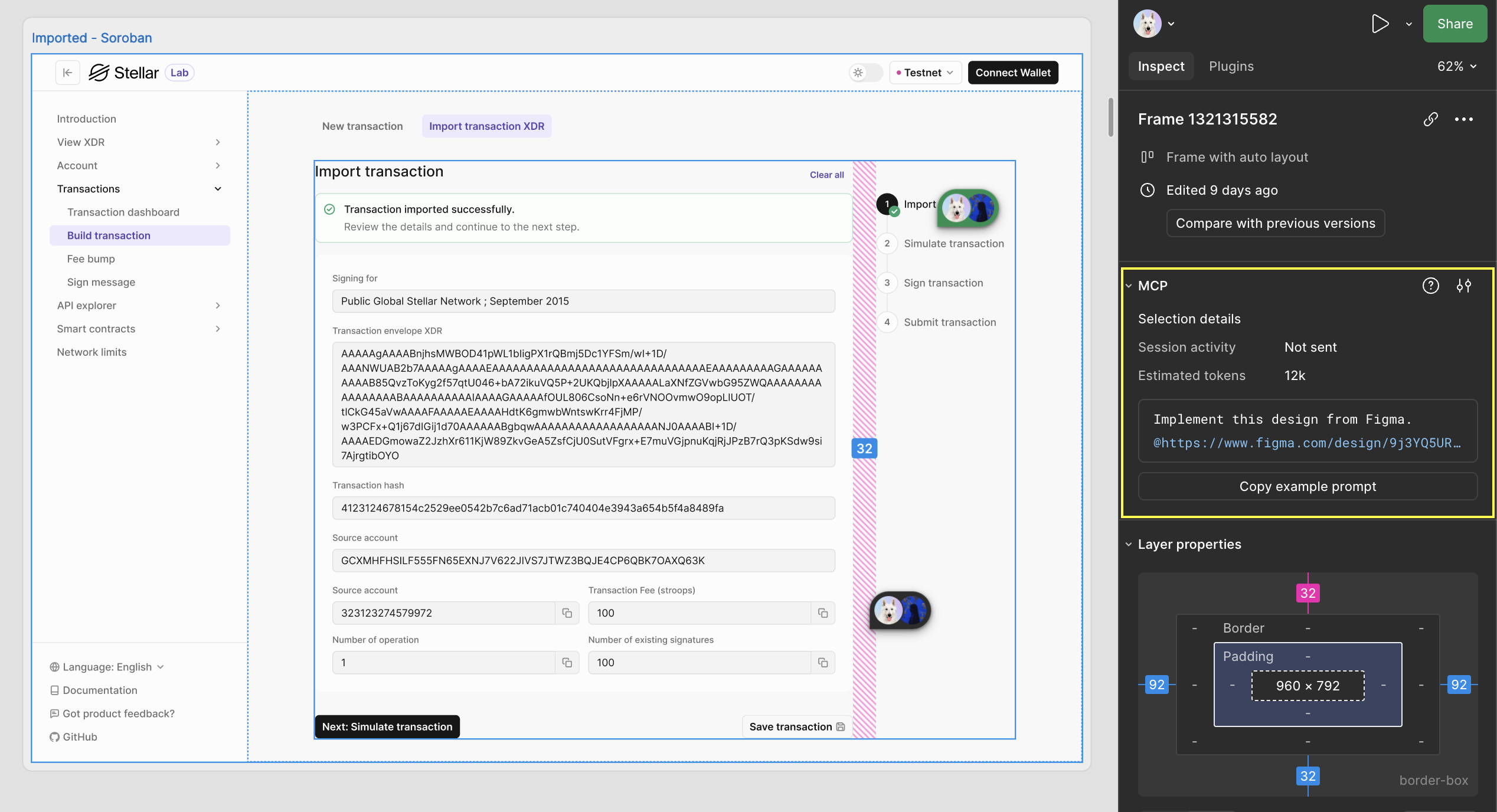

Since the company-wide adoption of Claude Code (CC) in February, I have been experimenting with different tools to improve my CC developer experience for Stellar Lab, the all-in-one web dev tool to build, sign, simulate, and submit transactions and interact with contracts on the Stellar network. The timing was perfect, as I became the solo maintainer of Stellar Lab this year. Stellar Lab is built on Next.js, TanStack, React, Zustand, and more. I also contribute to its backend, stellar/laboratory-backend.

My goal for Q1 was to find the best AI workflow to increase development velocity. CC has gotten significantly better since I first used it in the summer of 2025. Cursor was still my preferred developer tool back then, since I preferred to intervene while the AI worked and CC wasn't good enough to be left alone. Much has changed since. Today, running multiple CC sessions in parallel is the first thing I do in the morning at work.

Stellar Lab is a perfect guinea pig for integrating AI. It has extensive test coverage, so verifying AI changes is as easy as running the tests at the end. Figma MCP improvements in Q1 2026 allow CC to scan the Figma file and create components based on the design. It's not 100% perfect, as you will see in the examples below; however, it has improved a lot since I first tried it in October 2025. Our designer and I are working to improve this flow by adopting Code Connect, since transferring design to code is the major bottleneck of frontend development on Stellar Lab.

Stellar Lab's new backend integration taught me how to use AI not only to implement the backend, but also to learn it.

It's been an interesting Q1 as I integrated AI into Stellar Lab. It wasn't an easy process, as it showed me how much AI can do now. February was an especially stressful month for me. I felt very behind in terms of AI compared to the AI enthusiasts on X, and I couldn't stop comparing myself to them. It felt like hot trends became obsolete in a matter of weeks. AI felt so open-ended that I didn't know where to start. Then I slowly realized that I needed to stop comparing my usage of AI to others', since everyone's project is different and AI calibrates differently depending on the project. All I had to do was start and implement changes as I went. This shift in mindset improved my headspace, and I no longer feel so stuck. All this is to say that what works for Stellar Lab might not work for your project.

Below are the tools I've used to improve AI developer experience.

git worktree to work on multiple features in parallel

git worktree is a Git command that allows you to have multiple working directories for the same repository simultaneously, with each directory on a different branch. This enables parallel development without the overhead of constantly stashing changes or cloning the entire repository multiple times.

git worktree is my go-to tool for running multiple CC sessions to finish multiple tasks in parallel. My PR count went up 5x thanks to git worktree. The command itself is straightforward to use; however, when running multiple worktrees, it gets tedious to run the same commands over and over again to set up the project repo. I asked CC to create a custom bash script to streamline the git worktree workflow.

My custom script . worktree <branch name> from my dotfile repo does the following:

- It creates a worktree as a sibling next to the main repo (if the repo is ~/Code/laboratory, worktrees go in ~/Code/laboratory-{issue#})

- Copy node_modules using copy-on-write, plus common untracked files

- Run repo-specific setup
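The steps in this list can be sketched roughly as follows. This is a minimal illustration, not the actual script from my dotfiles: the function names, the `issue-` prefix stripping, the `.env.local` file, and the `.worktree-setup.sh` hook are all assumptions made for the example. It is written to be sourced, so the final `cd` lands your current shell in the new worktree.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of a worktree helper; not the real dotfile script.
# Source it and call new_worktree from the main checkout of the repo.

# Compute the sibling directory for a branch, e.g.
#   /home/me/Code/laboratory + issue-1910 -> /home/me/Code/laboratory-1910
# Stripping the "issue-" prefix is an assumption based on the naming above.
worktree_path() {
  local repo_root="$1" branch="$2"
  local suffix="${branch#issue-}"
  suffix="$(printf '%s' "$suffix" | tr '/' '-')"  # keep it a single path segment
  printf '%s-%s\n' "$repo_root" "$suffix"
}

# Copy a file or directory, cloning blocks where the filesystem supports it.
cow_copy() {
  if [ "$(uname)" = "Darwin" ]; then
    cp -cR "$1" "$2"                # macOS cp: -c clones via clonefile(2)
  else
    cp -R --reflink=auto "$1" "$2"  # GNU cp: reflink if possible, else plain copy
  fi
}

new_worktree() {
  local branch="$1" repo_root target
  [ -n "$branch" ] || { echo "usage: new_worktree <branch>" >&2; return 1; }
  repo_root="$(git rev-parse --show-toplevel)" || return 1
  target="$(worktree_path "$repo_root" "$branch")"

  # Reuse the branch if it already exists, otherwise create it from HEAD.
  if git -C "$repo_root" show-ref --verify --quiet "refs/heads/$branch"; then
    git -C "$repo_root" worktree add "$target" "$branch" || return 1
  else
    git -C "$repo_root" worktree add -b "$branch" "$target" || return 1
  fi

  # Dependencies and common untracked files (.env.local is an illustrative guess).
  [ -d "$repo_root/node_modules" ] && cow_copy "$repo_root/node_modules" "$target/node_modules"
  [ -f "$repo_root/.env.local" ] && cow_copy "$repo_root/.env.local" "$target/.env.local"

  # Repo-specific setup hook; the file name is hypothetical.
  [ -f "$target/.worktree-setup.sh" ] && (cd "$target" && sh ./.worktree-setup.sh)

  cd "$target" || return 1
}
```

With something like this sourced, `new_worktree issue-1910` run inside ~/Code/laboratory would create ~/Code/laboratory-1910 on the issue-1910 branch. The copy-on-write copy is what keeps the node_modules step fast, since no file data is duplicated until a file changes.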

The command takes less than a minute to finish. I open multiple terminal windows, run the . worktree command in each for the corresponding issue on GitHub, then run CC to complete the tasks in parallel.

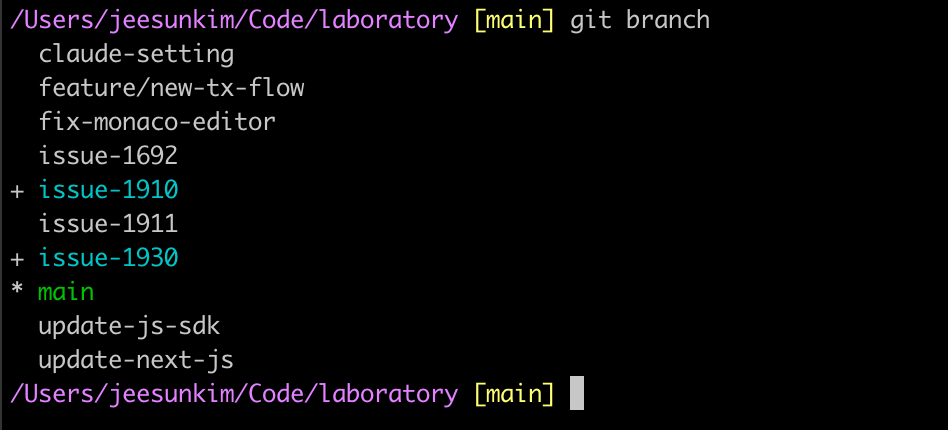

. worktree at work - notice the new repo created and assigned to a different branch

If you return to your main repo and run git branch, you'll see your worktree branch, since git worktree shares the same .git database. When you want to delete a worktree branch, you need to delete its worktree directory and run git worktree prune before git branch -D; Git refuses to delete a branch that is still checked out in a worktree.
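One way to sequence that cleanup is sketched below. The helper names and the `DRY_RUN` switch are assumptions for illustration. `git worktree remove` is a standard Git subcommand that deletes the worktree directory and its metadata in one step, so the `prune` that follows only matters for worktrees that were deleted by hand.

```shell
# Hypothetical cleanup helper. Set DRY_RUN=1 to print the commands
# instead of running them.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# remove_worktree <worktree-dir> <branch>
remove_worktree() {
  local dir="$1" branch="$2"
  [ -n "$dir" ] && [ -n "$branch" ] || {
    echo "usage: remove_worktree <worktree-dir> <branch>" >&2
    return 1
  }
  run git worktree remove "$dir" || return 1  # deletes the directory and its metadata
  run git worktree prune || return 1          # clears entries for directories deleted by hand
  run git branch -D "$branch"                 # allowed now that no worktree has it checked out
}
```

For example, `remove_worktree ../laboratory-1910 issue-1910` from the main repo. Note that `git worktree remove` refuses to delete a worktree with uncommitted changes unless you pass `--force` yourself.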

issue-1910 and issue-1930 are branches checked out by git worktree

CLAUDE.md and SKILL.md

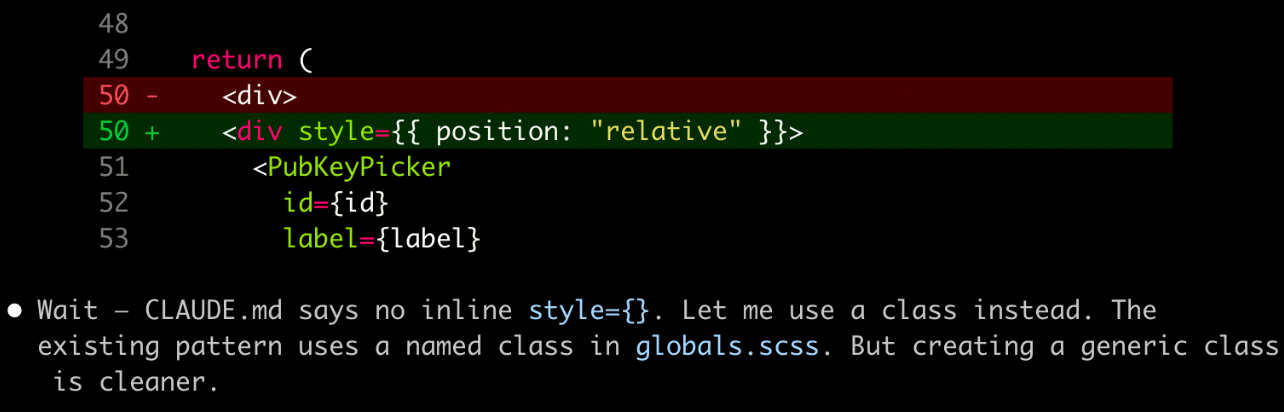

I created CLAUDE.md in February with Copilot. It generated a massive 557-line CLAUDE.md file. Since then, I've moved domain-specific content over to .claude/skills: /component-with-design-system, /figma-to-code, /react-rerender-mental-models, and more to come. The general rule of thumb for CLAUDE.md is to keep it lean and include only the information CC needs every time, such as the project overview, tech stack (no need to go in depth on popular tech stacks since CC already knows about them), dev commands, and hard constraints.

I really like the instruction in CLAUDE.md to add JSDoc comments to new functions, because each comment includes a reference to the docs CC used, which helps both agents and human readers.

SKILL.md files define skills that should only load when CC is actively working in that area. component-with-design-system/SKILL.md was the first skill I created, to prevent CC from creating brand-new components when existing or design system components already do the job.

Each skill is a folder containing a SKILL.md file. This file includes metadata (name and description, at minimum) and instructions that tell an agent how to perform a specific task. Skills can also bundle scripts, templates, and reference materials, which SKILL.md can point to so the agent reads them only when a task needs them.

Just like CLAUDE.md, it's also recommended to keep SKILL.md lean, so I keep a reference folder within each skill folder. For example:

- component-with-design-system/SKILL.md keeps the conventions (do/don't), file structure patterns, and templates since those are procedural instructions (how to work).

- component-with-design-system/reference/sds-components.md keeps reference data on the full component catalog and all props patterns.

- figma-to-code/SKILL.md keeps the purpose, workflow steps, and common pitfalls for AI (for example, "don't copy literally" instructions).

- figma-to-code/reference/figma-mapping.md holds all the information on mapping the Figma elements to SDS components, variables, and layout/responsive patterns.

- react-rerender-mental-models/SKILL.md is an imported SKILL.md that prevents AI from over-optimizing with useMemo and useCallback, which could backfire.
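To make the split concrete, here is a small sketch that scaffolds a skill folder with a lean SKILL.md and a separate reference file. The function name, the placeholder body text, and the `catalog.md` file name are assumptions for illustration; only the `.claude/skills/<name>/SKILL.md` location and the `name` and `description` frontmatter fields come from how skills are structured.

```shell
# scaffold_skill <project-root> <skill-name> <description>
# Hypothetical helper: creates .claude/skills/<skill-name>/ with a lean
# SKILL.md and a reference/ folder for bulky lookup data.
scaffold_skill() {
  local root="$1" name="$2" description="$3"
  local skill_dir="$root/.claude/skills/$name"
  mkdir -p "$skill_dir/reference" || return 1

  # Frontmatter carries the metadata; the body holds procedural instructions.
  cat > "$skill_dir/SKILL.md" <<EOF
---
name: $name
description: $description
---

# $name

## Conventions
- (do / don't rules go here)

## Reference
- Look up details in reference/catalog.md only when the task needs them.
EOF

  # Placeholder reference data, kept out of SKILL.md so the skill stays small.
  printf '# Catalog\n\n(placeholder: component names and props)\n' \
    > "$skill_dir/reference/catalog.md"
}
```

Calling `scaffold_skill . my-skill "When to use this skill"` from a project root would give you the two-file layout described in the list above, ready to fill in.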

Writing a design doc with CC & Copilot in VS Code

"Build transaction" on Stellar Lab is getting a facelift! The core functionality for building classic and Soroban transactions remains the same. The new features are the navigation, a different way to persist data, and auth entries. I prompted CC to create a design doc using the existing transaction flow as the base, and to come up with a plan to integrate auth entry signatures into the existing simulate transaction flow and replace zustand-querystring with session storage. Starting the doc was easy, but editing and removing redundant content to make it easier for humans to read took longer than I expected. I did the majority of the editing in VS Code, as I found its side-by-side view much easier to work in.

The new transaction flow doc is the final outcome of the design doc with CC. I liked how thorough it was, and it broke features down into phases, which came in handy later when I scoped the project into tasks. I was impressed by its ability to scan new design features via Figma MCP. I will go more in depth on Figma MCP later.

Reviewing the design document with Agent Team

After finishing the design doc with CC, I was happy with the result. Conversing and asking questions back and forth with CC on the doc helped me understand the features better, figure out the timeline, and prioritize tasks. I was still uncertain about our approach of saving the state in session storage, so I created an agent team made up of a UX team, a tech architect, and a devil's advocate for feedback on session storage state.

At the time of this writing, you have to enable agent teams in .claude's settings to run it.

    // settings.local.json
    "env": {
      "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
    }

I ran it in split terminal windows to see the changes in action. It suggested a beforeunload warning as a UX enhancement when working with the fields in the new transaction flow.

"beforeunload" warning: when the flow store has unsaved state (i.e., the user has progressed past the initial step or modified build params), closing or navigating away from the tab triggers a browser confirmation dialog. This protects against accidental tab close (cmd+w), browser crash recovery prompts, and mobile tab kills.

In the .claude directory, you can find an inbox directory with files that save some of the communication between the agents

Things I want to try:

- Add a skill or hook that would publish changes to GitHub on its own

Figma MCP

I still use the desktop version of the MCP server for Stellar Lab. Figma's official recommendation is to use their remote server; however, I was an early tester for Figma MCP back in September 2025 and it used a desktop version then. The desktop version works fine, so I haven't felt the need to switch to the remote server.

I use Figma MCP to:

- Generate code from selected frames: Select a Figma frame and turn it into code. This is handy when building a new feature or writing a design doc

- Extract design context: Pull in variables, components, and layout data directly into your IDE. This is especially useful for design systems and component-based workflows.

I have yet to try creating or modifying native Figma content directly from an MCP client. This is high on my to-do list for my personal project.

component-with-design-system/SKILL.md and figma-to-code/SKILL.md come in handy with our current Figma MCP setup. They push CC to reuse existing and design system components. However, once our Figma files adopt Code Connect, which connects components in the codebase directly to components in the Figma design, these skills might become obsolete.

We are going to implement Code Connect first in Stellar Design System, which Stellar Lab relies on, and then in Stellar Lab.

Does CC's output perfectly match Figma design? No, but it's pretty darn close.